In the fast-moving world of AI, few tools have attracted as much attention, and concern, as ClawdBot, the open-source autonomous AI agent that went viral at the start of 2026. Originally created by Peter Steinberger, a seasoned software developer and founder of PSPDFKit, ClawdBot was designed to be a self-hosted assistant that runs persistently on user systems and actually does things, not just chats.

But the very features that drove its rapid adoption have also made it one of the most dangerous and misunderstood AI projects to hit the community recently. The combination of deep system access, insecure deployments, rebranding chaos, and aggressive third-party exploitation now makes ClawdBot, renamed Moltbot after a trademark request from Anthropic, a risk most organizations should avoid entirely.

What Is ClawdBot (Now Moltbot)?

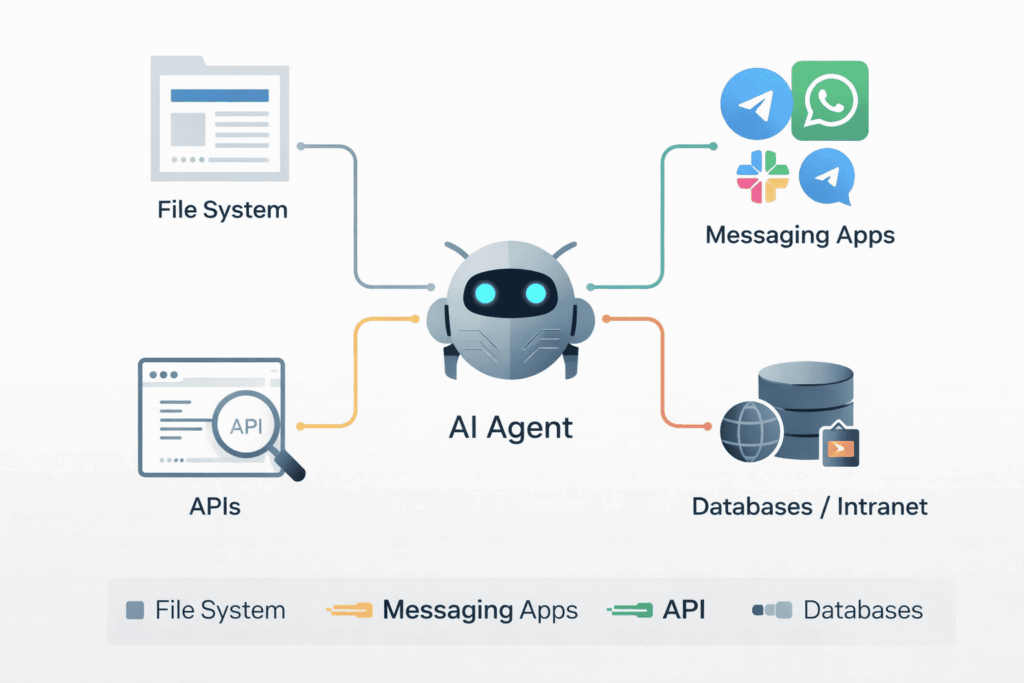

ClawdBot is an open-source AI agent framework that runs on devices you control — laptops, servers, or virtual machines. Unlike cloud-hosted chatbots, it:

- Persists in the background continuously
- Accesses local systems and user services
- Integrates with messaging platforms like Telegram, WhatsApp, Slack, and iMessage
- Executes tasks such as managing calendars, sending emails, executing scripts, or interacting with APIs

This agentic design blurs the line between assistant and operator. It behaves like a software agent with “hands and eyes” in your system rather than a query-response chatbot.

What Actually Went Wrong

The current turmoil around ClawdBot/Moltbot is not the result of a single centralized breach, nor is it a corporate product failure. Instead, multiple forces converged:

- Viral adoption outpaced security: Thousands of users deployed ClawdBot with little understanding of permissions, network exposure, or credential management.
- Rebranding chaos: After a trademark request from Anthropic over its original name, ClawdBot was renamed Moltbot. During this transition, social media handles and repositories were briefly hijacked, and scammers exploited the confusion with fake tokens.
- Exposed instances and keys: Security researchers and journalists have found unsecured control panels and exposed API keys in the wild, creating real opportunities for unauthorized access and data leakage.
- Malicious imitations: Fake extensions and clones that purport to be ClawdBot/Moltbot but drop malware on hosts have appeared on software marketplaces.

These issues are not theoretical. They reflect the actual state of the ecosystem surrounding this agentic AI tool.
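One of the problems above, API keys left readable in deployed files, can be checked for before anything goes live. The following is a minimal sketch, assuming configuration is stored as plain text; the function name `find_secrets` and both patterns are illustrative assumptions, not part of ClawdBot/Moltbot or any real scanner, and a production rule set would be far larger.

```python
import re

# Illustrative patterns only (assumptions for this sketch); real secret
# scanners ship much larger and more precise rule sets.
SECRET_PATTERNS = {
    "generic_api_key": re.compile(
        r"(?i)(?:api[_-]?key|token|secret)\b\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{16,}"
    ),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each line that looks like it holds a secret."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


# Hypothetical config contents used only to exercise the function.
sample = "name = demo\napi_key = 'abcd1234abcd1234abcd1234'\nport = 8080\n"
print(find_secrets(sample))
```

A check like this only flags obvious cases. It is a prompt for human review, not proof that a deployment is free of leaked credentials.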

Why ClawdBot Is Especially Dangerous

The danger of ClawdBot is not simply that it can give bad answers. A misplaced prompt or hallucinated message is trivial compared to what this software can do:

- System-level access: It can read and write files, execute shell commands, access credentials, and interact with connected services.
- Persistent presence: Once running, it may continue acting autonomously unless explicitly stopped.
- Network exposure: Poorly configured deployments can expose control interfaces on the public internet.
- Credential leakage: Exposed API keys or tokens can grant attackers access to connected services.

In other words, if you don’t know exactly what you’re doing, deploying ClawdBot is like giving a powerful automation engine full privileges on your systems without supervision.
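The network exposure risk often comes down to which address a control interface listens on. As a rough sketch, assuming the bind host is available as a string from some configuration, the hypothetical helper `bind_is_exposed` below flags anything other than a loopback address. It does not reflect how ClawdBot/Moltbot actually stores its settings.

```python
import ipaddress


def bind_is_exposed(host: str) -> bool:
    """Return True if listening on `host` can accept connections from other machines."""
    if host in ("", "*"):
        # Many servers treat an empty host or "*" as "all interfaces".
        return True
    if host == "localhost":
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: treat as exposed so a person reviews it.
        return True
    # 0.0.0.0, ::, LAN and public addresses are all non-loopback.
    return not addr.is_loopback


for host in ["127.0.0.1", "::1", "0.0.0.0", "192.168.1.20"]:
    print(host, "exposed" if bind_is_exposed(host) else "loopback only")
```

Binding to loopback is only one layer. A reverse proxy or tunnel placed in front of a loopback listener can still publish it, so authentication on the interface matters regardless of the bind address.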

Real-World Business Impact

For professional environments, the consequences can be severe:

- Security compromise: Unauthorized access to internal networks, customer data, or system credentials.
- Compliance violations: Data exposure may violate regulatory requirements (HIPAA, GDPR, CCPA).
- Reputational harm: Automated actions gone wrong can damage trust with clients and partners.
- Operational disruption: Autonomous agents with broad access can inadvertently interfere with production systems.

These are not edge cases. Independent reporting suggests hundreds of exposed Moltbot panels and significant security lapses in active deployments.

What You Should Do Instead

Right now, ClawdBot/Moltbot is best understood as a developer experiment, not a deployable enterprise solution. If your goal is to evaluate AI automation responsibly:

- Use tools with bounded privileges and clear audit trails.
- Favor managed platforms with enterprise security defaults.
- Ensure human-in-the-loop controls for any autonomous actions.
- Treat AI agents like infrastructure, not apps.

For most organizations, existing AI services with proper governance are safer than experimenting with agentic software that operates with deep system access.
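To make bounded privileges, audit trails, and human-in-the-loop control more concrete, here is a minimal sketch of an approval gate. Everything in it is an assumption for illustration: the action names, the `gate_action` function, and the `approve` callback standing in for a real human prompt are hypothetical and not taken from any agent framework.

```python
import json
import time
from typing import Callable

# Hypothetical policy for this sketch; action names are illustrative only.
ALLOWED_ACTIONS = {"read_calendar", "draft_email"}   # low risk, run freely
NEEDS_APPROVAL = {"send_email", "run_script"}        # require a human decision


def gate_action(action: str, approve: Callable[[str], bool], audit_log: list[str]) -> bool:
    """Decide whether an agent action may run, and record the decision."""
    if action in ALLOWED_ACTIONS:
        decision = "allowed"
    elif action in NEEDS_APPROVAL:
        decision = "approved" if approve(action) else "rejected"
    else:
        decision = "denied"  # default-deny anything not explicitly listed
    # Every decision is logged, including denials, so there is a full trail.
    audit_log.append(json.dumps({"ts": time.time(), "action": action, "decision": decision}))
    return decision in ("allowed", "approved")


log: list[str] = []


def deny_all(action: str) -> bool:
    """Stand-in for a real human approval prompt; always says no."""
    return False


print(gate_action("read_calendar", deny_all, log))  # on the allowlist
print(gate_action("send_email", deny_all, log))     # needs approval, reviewer declines
print(gate_action("delete_files", deny_all, log))   # not listed, denied by default
```

The design choice worth noting is the default-deny branch: an action the policy has never heard of is refused and logged rather than passed through.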

Conclusion

ClawdBot (now Moltbot) is a fascinating glimpse into what AI assistants could become. But the hype outstrips the reality. Today, it represents real security, operational, and governance risks, not a plug-and-play productivity boost.

This is not about fear. It’s about engineering discipline. When power outruns control, the result is not progress — it’s exposure. For most professionals and businesses, the correct move is to wait, learn, and prepare before adopting autonomous AI agents.

Let ClawdBot’s cautionary rise serve as a sober lesson in responsible innovation.

How we can help:

CRES Technology keeps your network and data protected so that you can feel secure and confident.

Many of our clients were in danger of becoming victims of cyberattacks. They needed an IT security partner to help prevent attacks from ever happening and to help them recover if an attack did happen. That’s where CRES Cybersecurity comes in.

With our extensive capabilities in cybersecurity and our partnerships with top cybersecurity software companies, we enable you to prevent cyberattacks, network exploitation, data breaches, phishing emails, and more. Our RMM audit assesses the health of your network and resources. We offer network penetration testing to prevent network exploitation, implement data loss prevention policies to prevent data breaches, and run phishing email tests to teach your staff to identify phishing emails. CRES Technology implements state-of-the-art Endpoint Detection & Response solutions that help your company recover from damage caused by cybercriminals.

About Irfan Butt

CRES Technology – Founder and CEO

Irfan is a strategic leader with over twenty years of progressive experience in Business Administration, Finance, Product Development, and Project Management. He has a proven track record in a broad range of industries, including hospitality, real estate, banking, finance, and management consulting.